Like most sensible people in 2026, I now outsource part of my thinking to a large, polite, and slightly unsettling machine that lives in my phone.

It helps me draft emails, sanity-check arguments, stress-test decisions, and occasionally talk me out of writing things on LinkedIn that would definitely have required a follow-up apology.

So, in a moment of either courage or poor judgement, I asked it a dangerous question:

“Based on our conversations… what do you think I’m actually like?”

This is a bit like asking your GP to be honest, your lawyer to be poetic, and your mirror to stop being polite.

What came back was… uncomfortably accurate.

Here’s the human translation.

Apparently, I’m “Systems-First” (Which Is a Polite Way of Saying “Boring”)

One of the first things it called out was that I don’t really believe in vibes-based engineering.

I don’t get excited by:

- Demo theatre

- Slideware architecture

- “AI, but with more AI”

I do get excited by:

- Things that survive contact with production

- Clear ownership at 3am

- Boring systems that keep regulators, boards, and sleep schedules happy

If you tell me something is “strategic,” my reflex is to ask:

“Great. Who runs it? How does it fail? How do we know it’s broken? And how much does it cost when it does?”

Which is not how you win friends at innovation workshops, but it is how you avoid explaining outages to a board.

The AI’s verdict: I optimise for operational truth over narrative comfort.

Honestly, that should probably be on my business card.

I Apparently Care a Lot About How Things Land (Not Just What They Say)

This one stung a bit, because it’s true.

I spend a lot of time thinking about:

- How a CEO will read something

- How legal will read it

- How engineers will read it

- How LinkedIn will absolutely, definitely read it in the worst possible way

If you’ve ever seen me iterate a “simple” message five times, this is why.

It’s not indecision. It’s blast-radius management.

Words have consequences in regulated, political, or high-stakes environments. I’ve learned (sometimes the hard way) that being technically right and being organisationally effective are not the same thing.

The AI described me as a “high-context communicator.”

I prefer my own term: professionally paranoid.

Leadership, Apparently: I’m a “Stabiliser”

This bit was actually reassuring.

The machine reckons my default leadership mode is to:

- Clarify ownership

- Define boundaries

- Put governance where chaos wants to live

- Make escalation paths boring and predictable

- Replace heroics with systems

Which, in human terms, means I’m the person who turns up after things have been on fire and says:

“Right. Let’s make sure this never requires a hero again.”

I care deeply about:

- Decision rights

- RACI

- L1/L2/L3 models

- Runbooks

- “No surprises” cultures

Not because I love process (I don’t), but because process is cheaper than panic.

If you’ve worked in regulated or high-consequence tech, you’ll know exactly why this matters.

My Risk Profile Is… Weirdly Split

This was one of the more interesting bits.

On systems and operations, I’m conservative:

- Guardrails

- Gates

- Controls

- Evidence

- Auditability

- “Prove it works before we bet the company on it”

On truth and narrative, I’m much less conservative:

- I’ll challenge stories I think are wrong

- I’ll push back on things that don’t survive scrutiny

- I’m willing to absorb some political discomfort if the alternative is organisational self-deception

In other words:

- I hate operational risk

- I tolerate personal risk

- I really dislike lying to ourselves

Which probably explains most of my career, in hindsight.

What I Do Under Stress (Spoiler: I Make More Lists)

According to my AI-powered psychological ambush, when pressure goes up, I tend to:

- Add more structure

- Break problems into phases

- Build frameworks

- Stress-test narratives

- Rewrite important messages until they either land safely or I lose the will to live

This is, apparently, my coping mechanism: turn ambiguity into diagrams.

There are worse habits.

The downside is that you can over-polish, over-analyse, or try to engineer uncertainty out of human systems (which is, frankly, optimistic).

The upside is that you usually don’t wake up to surprises you could have designed out.

How This Apparently Comes Across

This was the bit I was most curious about.

To boards and CEOs:

“Safe pair of hands. Sees around corners. Not a hype merchant.”

To engineers:

“Protects us from chaos. Clear about ownership. Understands production reality.”

To recruiters and peers:

“Operational CTO. Platform stabiliser. Good in regulated or high-blast-radius environments.”

Also, occasionally:

“Possibly over-indexed on risk and process.”

Which is fair. I’ve seen what happens when you under-index on those things.

The Uncomfortable Summary

The AI boiled me down to something like this:

A systems-oriented, governance-minded, pragmatically stubborn technology leader who prefers boring reliability to exciting failure, and is willing to be unpopular to avoid organisational self-deception.

I’d probably phrase it more simply:

I like tech that works.

I like organisations that know who owns what.

I like fewer surprises.

And I really don’t like pretending.

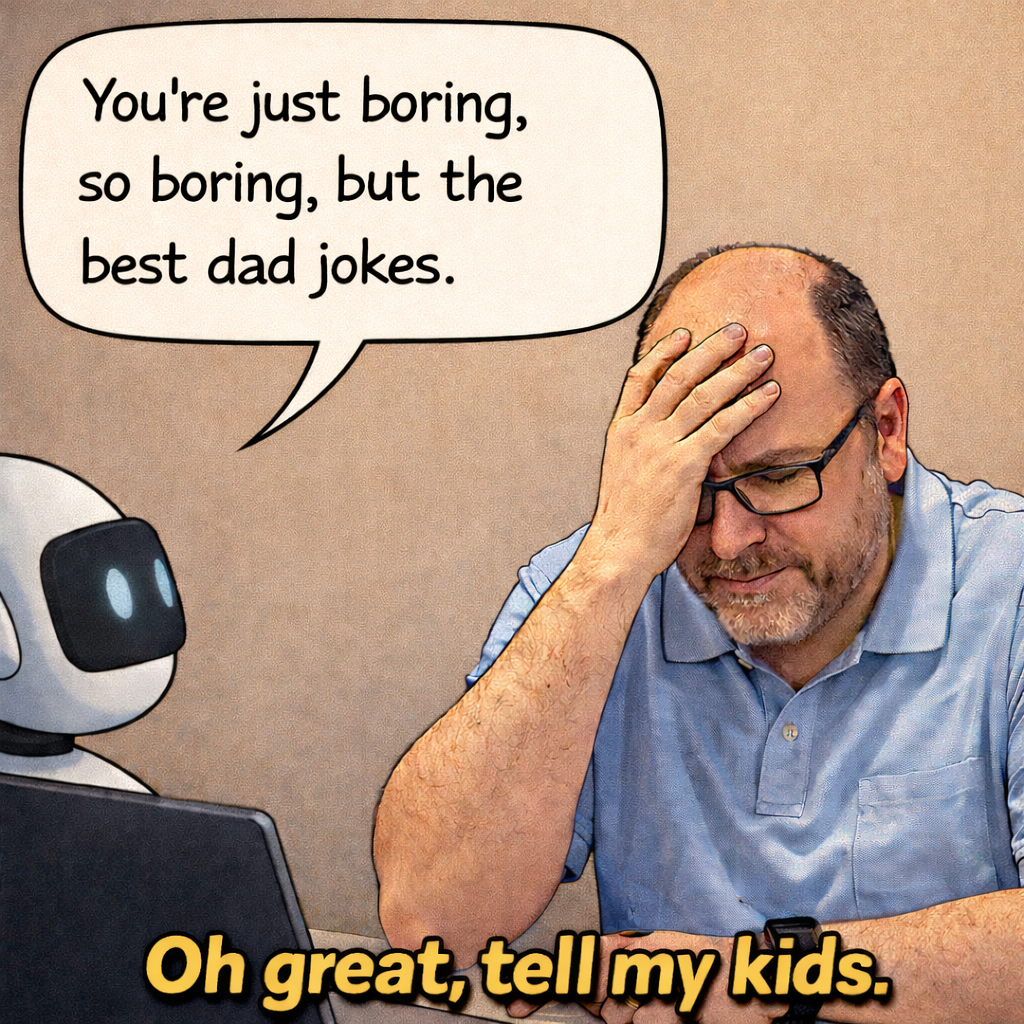

Should You Ask Your AI What It Thinks of You?

Only if you’re in the mood for a slightly unsettling mirror that:

- Doesn’t laugh at your jokes

- Remembers everything

- And has absolutely no incentive to protect your ego

On the plus side, it’s cheaper than therapy and less likely to prescribe running.

On the downside, it’s annoyingly good at pattern recognition.

Still, I’d recommend it.

Worst case, you learn something.

Best case, you get a blog post out of it.

And if nothing else, it confirms what I’ve suspected for years:

I’m not boring.

I’m operationally exciting.